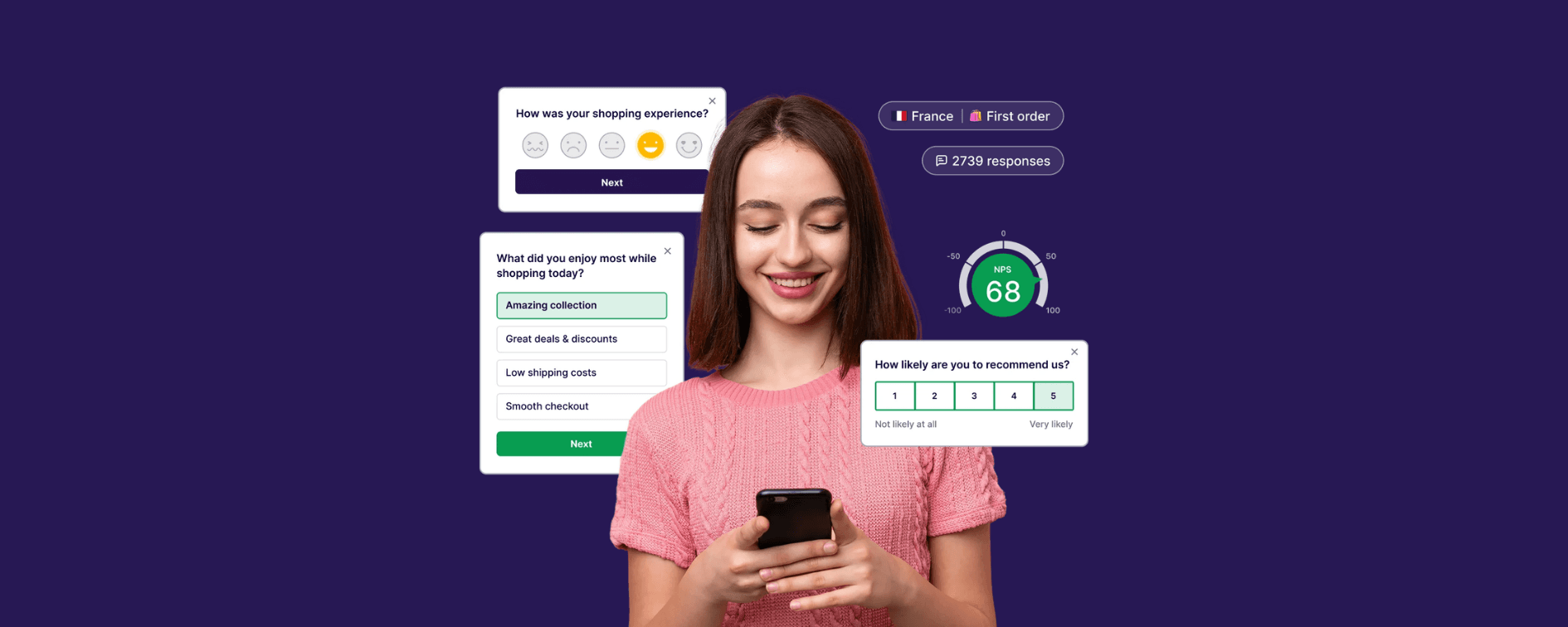

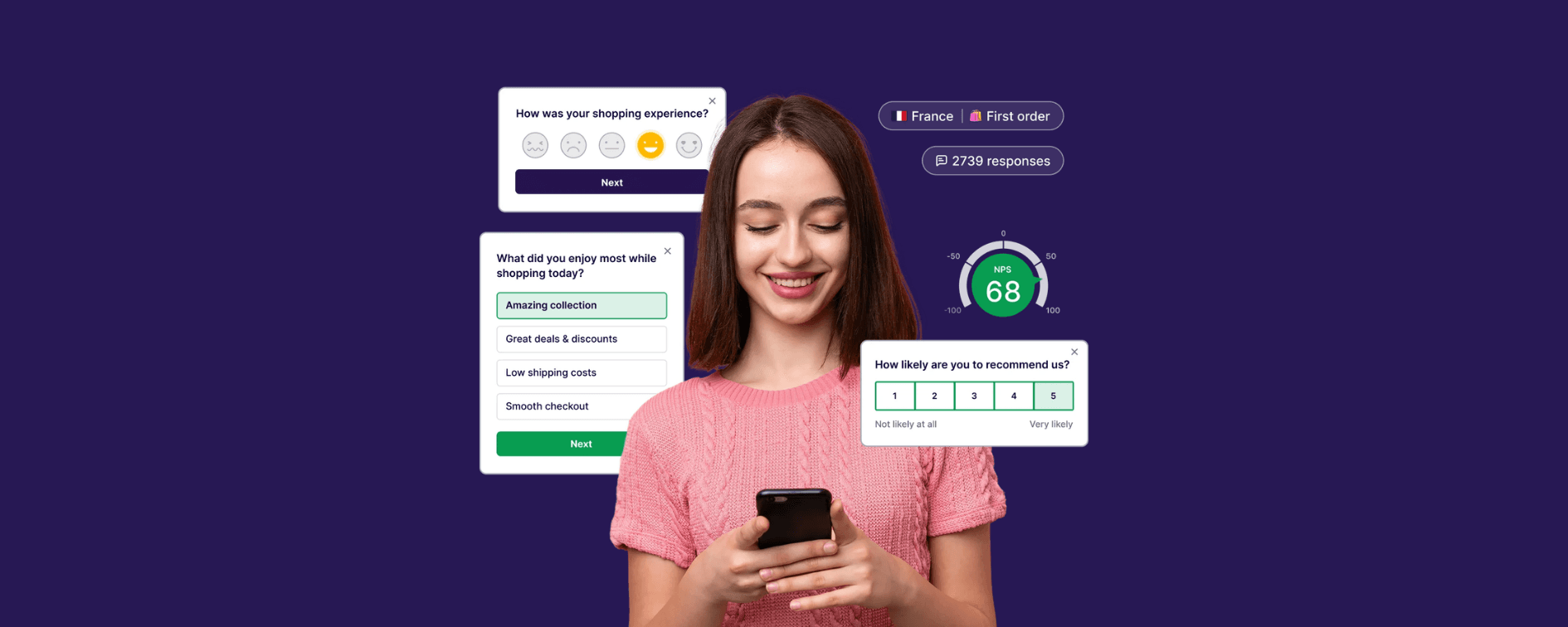

VWO Pulse: When Your Users Tell You Exactly What to Test Next

VWO Pulse brings survey feedback into the same platform where your experiments run. Here's how the closed loop works and why it changes what gets tested.

Companies that invest in Conversion Rate Optimisation (CRO) and experimentation programs see big returns: according to industry research, businesses using CRO tools generate an average return on investment of 223%.

Yet despite this promise, many teams struggle to gain traction even after buying popular experimentation tools like Optimizely, VWO, AB Tasty or Dynamic Yield.

Here’s a quick checklist for a successful experimentation program:

A clear experimentation roadmap (at least quarterly plans)

Ownership and accountability (a dedicated CRO owner, not someone handling it as an add-on responsibility)

Data-driven hypotheses (backed by analytics and customer insights, not opinion)

Consistent testing cadence

Involving the right roles in the program: data, UI/UX, marketing, ecommerce, and development

Read on to discover the four biggest mistakes CRO teams make.

Here’s what usually happens:

The experimentation tool is rolled out with great expectations.

Everyone is supposed to use it, but no one owns outcomes.

Tests aren’t planned based on real insights or goals.

Creativity takes over and intuition replaces data.

You spent a few months enthusiastically attending demos and talking to product teams, and finally selected an experimentation platform you’re happy with. You were told that everyone on your team should use it, and that the more you test, the better.

But who’s actually in charge?

The data team?

The UI/UX team?

The ecommerce team?

The marketing team? Or the development team?

Weeks went by. Nothing happened.

Your consultant gave you a nudge once in a while, presenting a list of great experimentation ideas and offering support if you needed it. You thanked them politely and said you’d get to it as soon as possible.

But before you knew it, weeks went by again.

This matters because experiments without clear direction or ownership rarely deliver value, and that’s a big reason why so many companies give up on CRO altogether. In fact, 80% of our clients stopped testing because they had run only 4 to 5 experiments over six months and hadn’t seen meaningful movement.

CRO is all about making micro-improvements, which compound over time to bring sustainable growth. Without a strong plan or a designated driver (DD) to lead testing strategy, experimentation becomes a chore instead of a strategic engine.
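To see why micro-improvements matter, here is a minimal Python sketch of how relative lifts compound. It assumes each winning test's lift is independent and applies on top of the baseline left by the previous winner, which is a simplification: real lifts can overlap, decay or interact. The +2% lift and the count of ten wins are hypothetical inputs, not client results.

```python
from math import prod

def cumulative_lift(lifts: list[float]) -> float:
    """Combine relative lifts (0.02 means +2%) multiplicatively.

    Assumption: each lift stacks on the baseline left by the previous
    winner; this is an idealisation, not a guarantee.
    """
    return prod(1 + lift for lift in lifts) - 1

# Hypothetical: ten winning tests, each worth a +2% relative lift.
ten_small_wins = [0.02] * 10
print(f"{cumulative_lift(ten_small_wins):.1%}")
```

Under these assumptions, ten +2% wins compound to roughly 21.9%, slightly more than the simple sum of 20%.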

A CRO program takes real work to become effective, and it starts with hypotheses backed by data.

We all love a shiny new animation: on the dresses category page, hovering over a product card shows a model wearing that dress instead of just the product image. Or we wonder: if the add-to-cart button sat higher up on the product detail page, maybe in a brighter orange instead of today’s boring grey, would users purchase more?

These are great ideas and valid questions.

But what if Google Analytics shows that 90% of your users are on mobile, meaning they’ll never see that hover effect? Or what if your add-to-cart rate is already above average, and the real drop-off happens later in the checkout flow? Then it may be better to test a reminder email, or a popup telling returning users that items left in their cart are selling fast or have dropped in price.
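The funnel check in the paragraph above can be sketched in a few lines of Python. The step names, session counts and benchmark rates are all hypothetical, chosen to mirror the scenario where add-to-cart already performs well but users drop off later in checkout; in practice the counts would come from your analytics tool and the benchmarks from your own history or industry data.

```python
# Hypothetical sessions reaching each funnel step, in order.
FUNNEL = [
    ("product_view", 10_000),
    ("add_to_cart", 1_800),
    ("begin_checkout", 1_200),
    ("purchase", 300),
]

# Hypothetical benchmark rates for each step-to-step transition.
BENCHMARK = {
    ("product_view", "add_to_cart"): 0.10,
    ("add_to_cart", "begin_checkout"): 0.60,
    ("begin_checkout", "purchase"): 0.45,
}

def step_rates(funnel):
    """Conversion rate between each pair of consecutive funnel steps."""
    return {
        (a_name, b_name): b_count / a_count
        for (a_name, a_count), (b_name, b_count) in zip(funnel, funnel[1:])
    }

def weakest_step(funnel, benchmark):
    """Transition whose rate falls furthest below its benchmark."""
    rates = step_rates(funnel)
    return min(rates, key=lambda step: rates[step] - benchmark[step])

for (a, b), rate in step_rates(FUNNEL).items():
    print(f"{a} -> {b}: {rate:.0%} (benchmark {BENCHMARK[(a, b)]:.0%})")
print("Test here first:", weakest_step(FUNNEL, BENCHMARK))
```

Comparing against benchmarks is the key design choice: the raw product-view-to-cart rate (18%) is the lowest in this funnel, yet it beats its benchmark, while checkout completion (25% against 45%) is where the real gap sits.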

But guess what? A/B testing without data-backed hypotheses is like shooting in the dark. You might get lucky, but most likely you’ll waste months with inconclusive results.

Solution: Assign a CRO owner, set quarterly goals, map your tests to business priorities, and build a hypothesis pipeline using analytics and customer insights.

Around 77% of businesses run A/B tests, but many programs aren’t structured for high success rates. Only about one in every seven A/B tests is a winning test: the average industry success rate (where a variant beats control) sits at roughly 12–15%.

Many teams view experimentation as a magic growth lever: run a few tests and, boom, your conversion rate explodes. Unfortunately, that’s not how it works.

Let’s be real: improving conversions by 20–30% with three random tests is not typical, especially if you’re starting from an average conversion base.

That means most tests won’t move the needle, at least not dramatically. What makes the difference is running high-velocity, well-prioritised experiments and accumulating learnings over time.
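A back-of-the-envelope calculation shows why velocity matters. This Python sketch assumes each test independently has about a one-in-seven chance of producing a winner (the rough industry rate cited above) and computes the expected number of winners and the chance of seeing at least one. Independence and a fixed win rate are simplifying assumptions, and the two test counts are hypothetical.

```python
def expected_winners(n_tests: int, win_rate: float) -> float:
    """Expected number of winning tests, assuming a constant win rate."""
    return n_tests * win_rate

def p_at_least_one_win(n_tests: int, win_rate: float) -> float:
    """Probability that at least one of n independent tests wins."""
    return 1 - (1 - win_rate) ** n_tests

WIN_RATE = 1 / 7  # roughly one winning test in seven

# Hypothetical: five tests in six months vs. two tests a month for a year.
for n in (5, 24):
    print(
        f"{n:>2} tests: expect {expected_winners(n, WIN_RATE):.1f} winners, "
        f"P(at least one) = {p_at_least_one_win(n, WIN_RATE):.0%}"
    )
```

Under these assumptions, five tests yield fewer than one expected winner and only about a 54% chance of any win at all, while 24 tests push the expected count above three.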

Solution: Treat CRO as a long-term program, not a quick fix. Craft a Goal Tree for your organisation that tracks not just the big KPIs like Conversion Rate or Revenue but also the micro-conversions, which should be the prime targets for your experiments when you’re starting out. When you want to increase conversion rate, scroll depth, whether users check out the Reviews section and whether they watch product videos on your product pages all matter as well!
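A Goal Tree is essentially a tree of metrics: a top-level KPI broken down into the micro-conversions that drive it. Here is a minimal Python sketch of that structure. The `Goal` class, the `micro_conversions` helper and the metric names are hypothetical, drawn from the examples in the paragraph above; the helper simply collects the leaf metrics, the most granular goals and the likeliest early experiment targets.

```python
from dataclasses import dataclass, field

@dataclass
class Goal:
    """A metric in the goal tree; children are the metrics that drive it."""
    name: str
    children: list["Goal"] = field(default_factory=list)

def micro_conversions(goal: Goal) -> list[str]:
    """Collect leaf metrics: the most granular, most testable goals."""
    if not goal.children:
        return [goal.name]
    leaves = []
    for child in goal.children:
        leaves.extend(micro_conversions(child))
    return leaves

# Hypothetical goal tree for an ecommerce product page.
tree = Goal("revenue", [
    Goal("conversion_rate", [
        Goal("scroll_depth"),
        Goal("reviews_section_viewed"),
        Goal("product_video_played"),
    ]),
])

print(micro_conversions(tree))
```

In a real program each node would also carry its current value and target, so a win on a leaf can be traced up to the KPI it supports.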

Good experimentation ideas don’t come from gut feelings or design preferences. They come from deep insights (analytics, user behaviour data and qualitative research) that show why users behave the way they do and what might move the metric you care about.

Here’s where many teams fall short:

Testing random visual tweaks

Hypotheses based on “sounds good” rather than evidence

Ignoring mobile user behaviour or segment variations

Industry experts emphasise that a test must be grounded in a clear hypothesis with expected outcomes. Otherwise, results are meaningless and hard to interpret. Even if a change moves the metric, without a solid hypothesis it’s hard to know why it worked, meaning you can’t repeat the success elsewhere.

Solution: Build each test around a clear hypothesis:

If we change X → we expect outcome Y because our data shows Z.

Use analytics, heatmaps, customer feedback and funnel analysis, not opinions, to fuel these hypotheses and their prioritisation.
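One way to enforce the "If we change X → we expect outcome Y because our data shows Z" template is to make the evidence a required field in whatever backlog you use. This Python sketch is illustrative only: the `Hypothesis` class, its field names and the example values are hypothetical, not part of any particular testing tool.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Hypothesis:
    change: str    # X: what we will change
    expected: str  # Y: the outcome we expect
    evidence: str  # Z: the data that justifies the expectation
    source: str    # where Z came from, e.g. analytics or heatmaps

    def __post_init__(self):
        # Reject "sounds good" ideas: every hypothesis must cite evidence.
        if not self.evidence.strip():
            raise ValueError("A hypothesis must cite supporting data (Z).")

    def __str__(self) -> str:
        return (f"If we {self.change}, we expect {self.expected} "
                f"because {self.evidence} ({self.source}).")

# Hypothetical example based on the cart-abandonment scenario.
h = Hypothesis(
    change="show a cart-reminder popup to returning users",
    expected="more completed checkouts",
    evidence="most drop-off happens between checkout start and purchase",
    source="funnel analysis",
)
print(h)
```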

CRO programs are marathons, not sprints. It’s easy to start strong, run a few tests and see some results, but enthusiasm then fades when:

Tests take longer than expected to reach statistical significance

Variants don’t deliver instant wins

Teams revert to business-as-usual work

Some experiments can take weeks to reach statistical confidence, and that’s if you have sufficient traffic. This slow cycle can kill momentum, especially in fast-moving teams that want quick results.
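To see where "weeks" comes from, here is a rough Python sketch using the standard normal-approximation sample-size formula for comparing two conversion rates with a two-sided test. The 3% baseline, the +10% relative minimum detectable effect and the daily traffic are hypothetical inputs; your testing platform's own calculator may use a different statistical method and give different numbers.

```python
from math import ceil
from statistics import NormalDist

def sample_size_per_variant(baseline: float, relative_mde: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Visitors per variant to detect a relative lift (normal approximation)."""
    p1 = baseline
    p2 = baseline * (1 + relative_mde)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)

# Hypothetical inputs: 3% baseline conversion, detect a +10% relative lift.
n = sample_size_per_variant(baseline=0.03, relative_mde=0.10)
daily_visitors = 5_000  # hypothetical traffic, split evenly across 2 variants
days = ceil(n / (daily_visitors / 2))
print(f"{n:,} visitors per variant, about {days} days")
```

With these assumptions the test needs roughly 53,000 visitors per variant, a little over three weeks of traffic. Halving the detectable effect roughly quadruples the sample, which is why low-traffic pages are slow places to start.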

Solution:

Start with high-impact, high-traffic areas

Use frameworks like ICE (Impact × Confidence × Ease) to score ideas

Share learnings openly across your organisation. Inconclusive experiments matter too, if you read the insights right: looking at CTR for just organic search traffic, mobile traffic, or other segments of your audience may make the data much more meaningful. This builds a learning culture and keeps CRO alive.
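ICE scoring, as listed above, multiplies Impact, Confidence and Ease. Below is a minimal Python sketch that ranks a backlog this way, assuming each factor is scored from 1 to 10 (a common convention; some teams average the three scores instead of multiplying them). The ideas echo examples from earlier in this article, but their scores are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Idea:
    name: str
    impact: int      # 1-10: expected effect on the target metric
    confidence: int  # 1-10: strength of the supporting evidence
    ease: int        # 1-10: how cheap and fast it is to build and run

    @property
    def ice(self) -> int:
        """ICE score as Impact x Confidence x Ease."""
        return self.impact * self.confidence * self.ease

# Hypothetical backlog with hypothetical scores.
backlog = [
    Idea("Hover animation on category page", impact=4, confidence=2, ease=5),
    Idea("Cart-abandonment reminder popup", impact=7, confidence=7, ease=6),
    Idea("Orange add-to-cart button", impact=3, confidence=3, ease=9),
]

for idea in sorted(backlog, key=lambda i: i.ice, reverse=True):
    print(f"{idea.ice:>4}  {idea.name}")
```

Multiplying rather than adding means one very low score (such as weak evidence) drags an idea down sharply, which suits a program trying to avoid opinion-led tests.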

CRO isn’t just A/B testing. A/B testing is a tool, but CRO is a process, from research to hypothesis to execution to measurement and learning. Teams that focus only on running tests miss the bigger opportunity: continuously improving customer experience based on insights over time. A strong CRO program ties experiments to business goals, customer journey gaps, long-term retention outcomes and strategic prioritisation.

That’s why companies with mature experimentation programs outperform those that treat testing as a one-off tactic.

At Paved Digital, we help teams build the right foundations before scaling experimentation. Our 3-month CRO & Experimentation Accelerator is designed to get your program working properly.

What our 3-month CRO Accelerator helps you do:

Establish a clear CRO roadmap aligned to business goals

Build data-driven hypotheses (not opinion-led tests)

Set up ownership, governance, and testing cadence

Prioritise experiments that impact conversion, revenue, and retention

Create momentum before committing to a full-scale experimentation program

This accelerator gives your team the structure, confidence, and repeatable process needed to treat CRO as a long-term growth capability, not a short-term experiment.

Want to learn more about CRO or need hands-on experimentation support? Reach out to Paved Digital to see if our 3-month CRO Accelerator is right for your team.

Head of Digital Strategy

Tien leads digital strategy at Paved Digital, bringing extensive experience in driving user-centric, data-led ecommerce growth. She connects business objectives with technology, leading discovery workshops and shaping strategies across SEO, GEO, data, personalisation and experimentation to deliver clear, outcome-driven roadmaps with real business impact.
